After a week of agentic coding on my own credit card, here are some notes, mostly to organize my own thoughts. I don’t like the term vibe coding, although most of this code has been reviewed only cursorily, as reading through the 29k LoC produced this week even once or twice would be a full-time job.

OpenCode Zen (free tier)

As of today, it has 3 models:

- Big Pickle (unknown, presumably older GLM?)

- Minimax M2.5 - 230B MoE model

- Trinity Large Preview from Arcee AI - 400B MoE model

I am not sure if they are quantized for economic reasons or not, but at least the earlier free GLM 5 did not perform very well in my tests last week. Trinity did not impress me either (it seemed quite slow and less capable than Minimax), but I am using Minimax as my daily driver when I don’t need a more powerful model.

OpenCode Go (beta)

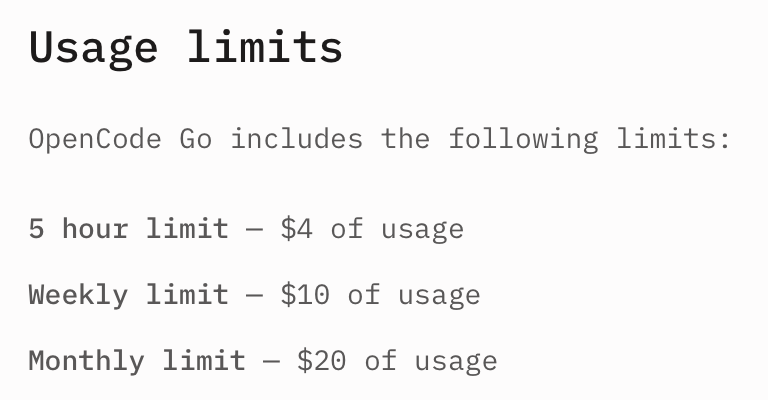

OpenCode has a Go tier, which costs $10/month. However, from their information page on Go:

So it is not that heavily subsidized. I am considering subscribing to it, though, as I sometimes hit the Minimax rate limit, and who knows, I might want to use the other models they offer too.

OpenAI Codex (Free, Plus)

OpenAI has a weekly quota rather than a monthly one, and I like it: during the week I can switch focus to something else (thinking about other projects, or improving them with other, less capable models), and then when the quota resets, I can go all in on something new again.

Initially I tried using the free tier, but I ran out of weekly quota in 3 hours. Then I changed to the Plus tier (23€/month in Europe), which has lasted me almost the whole week. The latest 106 build of Codex CLI apparently had a bug though (according to unsubstantiated comments on Reddit) that made it use quota a bit faster, but they reset the weekly quota ahead of time, so perhaps the bug was real.

But this is where we get to the interesting part: for a bit over 5€ (one week’s share of the monthly fee), how much usage did I get by using about 90% of my weekly quota?

ccusage_codex: aliased to npx @ccusage/codex@latest

The numbers are, to put it frankly, mind-boggling:

WARN Fetching latest model pricing from LiteLLM... @ccusage/codex 9:02:24 AM

ℹ Loaded pricing for 2651 models @ccusage/codex 9:02:25 AM

Codex Token Usage Report - Daily (Timezone: Europe/Helsinki)

| Date | Models | Input | Output | Reasoning | Cache Read | Total Tokens | Cost (USD) |
| --- | --- | ---: | ---: | ---: | ---: | ---: | ---: |
| Feb 25, 2026 | gpt-5.2-codex, gpt-5.3-codex | 3,676,802 | 634,523 | 183,608 | 79,072,256 | 83,383,581 | $29.16 |
| Feb 26, 2026 | gpt-5.3-codex | 8,538,360 | 1,066,184 | 324,767 | 166,454,016 | 176,058,560 | $59.00 |
| Feb 27, 2026 | gpt-5.3-codex | 12,774,997 | 1,571,687 | 471,054 | 211,944,960 | 226,291,644 | $81.45 |
| Feb 28, 2026 | gpt-5.3-codex | 774,063 | 171,456 | 57,083 | 23,280,640 | 24,226,159 | $7.83 |
| Mar 01, 2026 | gpt-5.3-codex | 588,368 | 89,101 | 32,095 | 18,056,704 | 18,734,173 | $5.44 |
| Mar 02, 2026 | gpt-5.3-codex | 1,196,447 | 199,528 | 71,832 | 26,055,680 | 27,451,655 | $9.45 |
| Total | | 27,549,037 | 3,732,479 | 1,140,439 | 524,864,256 | 556,145,772 | $192.32 |

It seems that the bit over 5€ really buys a bit over $200 of tokens, based on the current off-the-shelf pricing. Codex also seems to use the cache quite effectively (95% hit rate or so). These tokens got my 3 new projects off to quite a healthy start:

- 2/3 of fingon/proprdb: PROPRDB (PROtobuf PackRat DataBase) (the rest was done with Minimax M2.5)

- Most of the not-yet-published ‘lifelog’ Go project (a Python -> Go conversion of an old codebase dating to 2014-2019). The plan is to open source it once I no longer need to make backwards-incompatible changes to the protobufs and it has worked fine for me for a while, but we shall see when that happens.

- Secret new iOS app 😀
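The headline figures above (the roughly 95% cache hit rate, the "bit over $200" value of a full weekly quota, and the "bit over 5€" weekly share of the Plus fee) can be re-derived from the report totals. This is only a sanity-check sketch: it assumes the ccusage "Input" column counts uncached input only (consistent with Total = Input + Output + Cache Read in the report), that usage scales linearly up to 100% of the quota, and that the monthly fee is spread evenly over 52 weeks. The function names are made up for illustration.

```python
# Weekly totals copied from the ccusage report above.
INPUT_TOKENS = 27_549_037        # assumed to be uncached input only
CACHE_READ_TOKENS = 524_864_256  # input tokens served from the prompt cache
WEEK_COST_USD = 192.32           # "Cost (USD)" total at list prices
QUOTA_USED = 0.90                # "about 90%" of the weekly quota
PLUS_EUR_PER_MONTH = 23.0        # Plus tier price in Europe


def cache_hit_rate(uncached: int, cached: int) -> float:
    """Share of all input tokens that were read from the cache."""
    return cached / (uncached + cached)


def full_quota_value(cost_usd: float, fraction_used: float) -> float:
    """Linear extrapolation of the list-price value to 100% of the quota."""
    return cost_usd / fraction_used


def weekly_fee_eur(monthly_eur: float) -> float:
    """Monthly fee spread evenly over the 52 weeks of a year."""
    return monthly_eur * 12 / 52


if __name__ == "__main__":
    rate = cache_hit_rate(INPUT_TOKENS, CACHE_READ_TOKENS)
    print(f"cache hit rate:        {rate:.1%}")
    print(f"full weekly quota:     ${full_quota_value(WEEK_COST_USD, QUOTA_USED):.2f}")
    print(f"weekly share of Plus:  {weekly_fee_eur(PLUS_EUR_PER_MONTH):.2f} EUR")
```

If the "about 90%" estimate is off by a few points, the extrapolated quota value moves with it, so the $200 figure is best read as an order of magnitude.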

Why not a Claude plan?

I have used Claude professionally for quite a while, and I figured it is time for a change of pace. I also heard good things about Codex, and so far it seems to be pretty decent. I might experiment to see how long the Claude plans last under heavy use at some point though (paid per token, it is too expensive a hobby).

Models and cost of using them per token

Note: All prices are from openrouter.ai as I am not motivated enough to look at the providers’ own prices, but I assume they are not substantially more expensive. Cost is per million tokens. I’m omitting cache prices (they seem to be 1/10 or 1/20 of the normal input token price, which is good business, as cached tokens are actually cheaper to serve, but perhaps I will write about that someday).

- Claude Opus 4.6

  - <200k context: $5 input, $25 output

  - 1M context: $10 input, $37.5 output

- Claude Sonnet 4.6

  - <200k context: $3 input, $15 output

  - 1M context: $6 input, $22.5 output

- MiniMax M2.5 (200k context): $0.3 input, $1.2 output

- OpenAI GPT 5.3 Codex (400k context): $1.75 input, $14 output

- Qwen 3.5 Plus (not open model, but seemingly not much better than the open weighted one)

  - <256k context: $0.4 input, $2.4 output

  - 1M context: $0.5 input, $3 output

- Qwen 3.5 397B A17B (256k context): $0.55 input, $3.5 output

- Qwen 3.5 35B A3B (256k context): $0.25 input, $1 output (cheapest - Alibaba is $2)

It is interesting to note that Qwen 3.5 Plus is actually cheaper than their largest open-weights model. Another interesting thing is that their output speeds (according to openrouter.ai) are quite low, e.g. Qwen 3.5 397B produces ~20-30 output tokens per second. Most of the paid high-end models are in the 50-100 range.
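To get a feel for what these list prices mean in practice, here is a rough sketch that prices this week's Codex token mix (27.5M uncached input, 3.7M output, 524.9M cache reads) at each model's lower-context rate. It assumes cached input costs 1/10 of the normal input price for every model, which is only a guess on my part, and it pretends every model would have produced the same token counts, which they certainly would not (different tokenizers, reasoning lengths and context limits). The `Price` class and `cost_usd` helper are made up for illustration. Under the 1/10 assumption, the GPT 5.3 Codex row comes out within a cent of the $192.32 that ccusage reported.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """List price in USD per million tokens."""
    input_usd: float
    output_usd: float


# Lower-context-tier prices from the list above.
PRICES = {
    "Claude Opus 4.6": Price(5.0, 25.0),
    "Claude Sonnet 4.6": Price(3.0, 15.0),
    "MiniMax M2.5": Price(0.3, 1.2),
    "GPT 5.3 Codex": Price(1.75, 14.0),
    "Qwen 3.5 Plus": Price(0.4, 2.4),
    "Qwen 3.5 397B A17B": Price(0.55, 3.5),
    "Qwen 3.5 35B A3B": Price(0.25, 1.0),
}

# ASSUMPTION: cache reads are billed at 1/10 of the input price everywhere.
CACHE_DISCOUNT = 0.10

# Weekly totals from the ccusage report.
WEEK_INPUT = 27_549_037
WEEK_OUTPUT = 3_732_479
WEEK_CACHE_READ = 524_864_256


def cost_usd(price: Price, input_tokens: int, output_tokens: int,
             cache_read_tokens: int,
             cache_discount: float = CACHE_DISCOUNT) -> float:
    """Cost of a token mix at per-million list prices."""
    total = (input_tokens * price.input_usd
             + output_tokens * price.output_usd
             + cache_read_tokens * price.input_usd * cache_discount)
    return total / 1_000_000


if __name__ == "__main__":
    # Print the models from most to least expensive for this token mix.
    costs = {name: cost_usd(p, WEEK_INPUT, WEEK_OUTPUT, WEEK_CACHE_READ)
             for name, p in PRICES.items()}
    for name, usd in sorted(costs.items(), key=lambda kv: -kv[1]):
        print(f"{name:<20} ${usd:8.2f}")
```

Because cache reads dominate the token count, the assumed cache discount matters almost as much as the headline input price; rerunning with `cache_discount=0.05` shows how much the totals shift.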

So what am I going to use going forward?

The answer will vary, of course, but at least for open source projects I will probably tinker with the OpenCode free tier, and/or cheap open models on openrouter. While running a model locally is an option for me, I don’t have more than 32GB at home, so anything larger than Qwen 35B A3B is not an option. And I don’t currently see a financially sane way to get e.g. a 397B model running locally with good prompt processing speed. For the record, a Mac Studio M3 Ultra at least isn’t fast enough for me, as I am spoiled by the hosted models running on GPUs.
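The 32GB limit can be made concrete with a back-of-the-envelope estimate of how much memory the weights alone need. This is a rough sketch, not a sizing tool: the bits-per-weight values are assumed quantization levels (I have not checked which quantizations actually exist for these models), and it ignores the KV cache, activations and runtime overhead, so real requirements are higher. Note that for MoE models all experts have to be resident, so the total parameter count is what matters here, not the active one. The function names are made up for illustration.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float,
         memory_gb: float = 32.0) -> bool:
    """True if the weights alone fit in the given memory (no headroom)."""
    return weights_gb(params_billion, bits_per_weight) <= memory_gb


if __name__ == "__main__":
    # Total parameter counts from the model names; bit widths are assumptions.
    for name, params in [("Qwen 3.5 35B A3B", 35), ("Qwen 3.5 397B A17B", 397)]:
        for bits in (4, 8, 16):
            size = weights_gb(params, bits)
            verdict = "fits" if fits(params, bits) else "does not fit"
            print(f"{name} @ {bits}-bit: {size:6.1f} GB, {verdict} in 32 GB")
```

Under these assumptions the 35B model only fits in 32GB at around 4 bits per weight, and the 397B model needs roughly 200GB even at 4 bits.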

For my potentially non-open-source efforts (like the app I started sketching) I will most likely mainly use Codex for the time being, although I hear good things about Gemini for frontend development, so perhaps I will dip into it a bit as well using openrouter.ai. As I don’t want to use Google’s development tooling, I don’t plan to even look at their subscription plan.

I am really looking forward to Codex Spark for implementing some things though: as it uses Cerebras chips, it should be an order of magnitude faster (even if a bit stupider than the default Codex), and that sounds lovely. My Plus plan doesn’t include it yet, and they haven’t communicated about its real availability (that I know of), but it might be enough for me to go for the Pro tier too (which is 200€/month or something).

Some people also claim that LLMs bring ‘almost no productivity gain’ or a ‘10% productivity gain’, but 29k lines of actually working code would have taken me perhaps 5-6 weeks in my prime, and I am not sure I would feel up to that anymore. That is not even counting the iterations, as the code has also changed quite a bit: I am trying out some ideas on how to do this stuff, and it is much easier to give a prompt and wait an hour than to think hard for a day, write code for a week, and then find out the idea sucked.

Anyway, back to hacking!