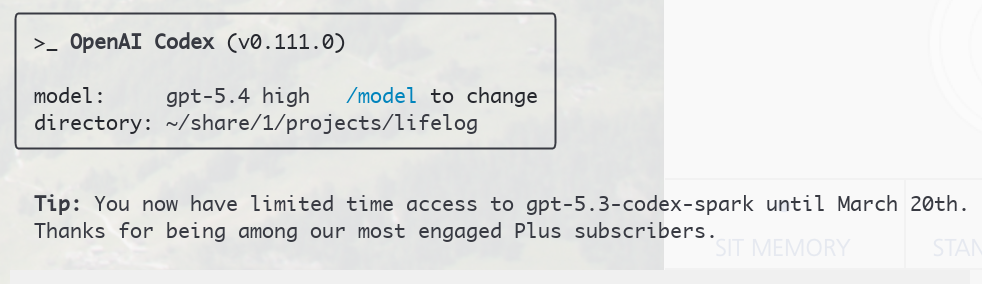

After a brew upgrade (and the GPT 5.4 announcement last night), I was excited to see what was up.

Interesting.

Try 1

I planned one minor feature using planning mode (gpt-5.4 high) and implemented it using gpt-5.3 codex spark. Codex spark is backed by super-fast Cerebras megachips, so I was optimistic about the speed (if not the quality).

Observations:

- REALLY fast (a couple of seconds to implement something a couple of hundred lines long)

- A bit stupid, or just literal? It did not run unit tests by default

- Unit tests had an error, which it fixed

- It had used 62% of its context by this time(!), which seems like an order of magnitude less context than 5.3 Codex

Try 2

Without planning mode, on high, I asked it to refactor logging in the app I am working on. It did what I asked, but again did not run the tests, since I had not explicitly told it to. The outcome was also pretty ugly: it did not look at (or know) the API surface of the logging library, so I had to send gpt-5.4 after it to clean up.

After this, I realized that, at least for me, gpt-5.4 doesn’t want to run tests by default either. I guess I need to add some more instructions to AGENTS.md.
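For illustration, this is roughly the kind of thing such instructions could look like. It is a hypothetical sketch, not the actual contents of my AGENTS.md: the section title and wording are made up, and it assumes a Go module (`go test ./...`) and a Swift package (`swift test`) in a standard layout.

```markdown
<!-- Hypothetical AGENTS.md fragment; headings and wording are illustrative only -->
## Testing

- After every code change, run the test suite before reporting the task as done.
- Go code: run `go test ./...` from the module root.
- Swift code: run `swift test` from the package root.
- If a test fails, fix it and re-run the tests; do not stop at the first green run of a single package.
- Before using a third-party library, read its public API instead of guessing function names.
```

Whether a given model actually follows instructions like these is a separate question.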

Try .. N ..

Even with AGENTS.md prompting, I could not get Spark to reliably run tests on every iteration, and in general it needed a lot more prodding. It is definitely less proficient on its own, and it also made a number of errors (e.g. shell command escaping, invalid code). So while it produced ‘something’ fast, that something was frequently wrong and required a couple of iterations to get right.

I will perhaps experiment a bit more with it going forward, but I am not that impressed with it so far. GPT 5.4, on the other hand, seems like a solid model, although not substantially different from 5.3 Codex for what I use it for (mainly Go and Swift coding).