Background

I have been working on a number of vibe-coded apps recently, after I gave up on my most recent startup and before starting to work somewhere else. So I have done lots of projects I never had the time for before.

HomeNetFlow

Lixie’s first iteration, which I wrote by hand in 2024 (see Observability at home), was and still is useful. It is still only about categorizing log lines by hand, and then having those rules applied to logs at scale using vector. My home infrastructure still uses rules generated by it, and I look at the logs (filtered based on those rules) quite often.

The original idea was also to gather NetFlow data and to categorize connections in my home network. I even implemented some parts of it in 2024 (see e.g. Oh my god, it is full of containers!), such as dumping netflows to my frankenrouter's disk using nfdump, and sending DNS queries to the vector pipeline and subsequently to a Grafana dashboard.

However, I never found the time to work on an actual frontend for this, until this year.

I started working on the web app at the end of March, and now, about 3 weeks later, it is mostly done, as far as I care to work on it.

The basic flow to create the data using the tools in the homenetflow repository is:

- Frankenrouter runs nfdump and stores the netflows for the bridged interface across which most of my in-home traffic goes (and also out-of-home traffic for the most part)

- When I feel like it, I copy the nfdump files over to my laptop and convert them to parquet format using `nfdump2parquet --src . --dst raw-parquet`

- Occasionally I download the logs to a specific directory (`lokileech --also-today --days 5`)

- With the available data, I create refined parquet files using `parquethosts --src-parquet raw-parquet --src-log logs --dst parquet`

(The first part is a pyinfra container, and I have a Makefile which does the rest.)
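As a rough illustration of the laptop-side steps above, here is a minimal Python sketch that chains the three commands in order. The command lines are copied from the list as-is; everything else (running them from one working directory, the function names, the dry-run default) is my assumption, and this is not the Makefile from the repository.

```python
import shlex
import subprocess

# Command lines copied from the steps above. Running them back to back from
# a single working directory is an assumption of this sketch.
PIPELINE = [
    "nfdump2parquet --src . --dst raw-parquet",
    "lokileech --also-today --days 5",
    "parquethosts --src-parquet raw-parquet --src-log logs --dst parquet",
]


def build_commands(pipeline=PIPELINE):
    """Split each command line into an argv list suitable for subprocess."""
    return [shlex.split(line) for line in pipeline]


def run_pipeline(dry_run=True):
    """Go through the steps in order; a failing step aborts the rest.

    With dry_run=True the commands are only printed, never executed.
    """
    for argv in build_commands():
        print("+", shlex.join(argv))
        if not dry_run:
            # check=True raises CalledProcessError on a non-zero exit code.
            subprocess.run(argv, check=True)


if __name__ == "__main__":
    run_pipeline(dry_run=True)
```

Keeping the dry run as the default makes it easy to check what would be executed before touching any data.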

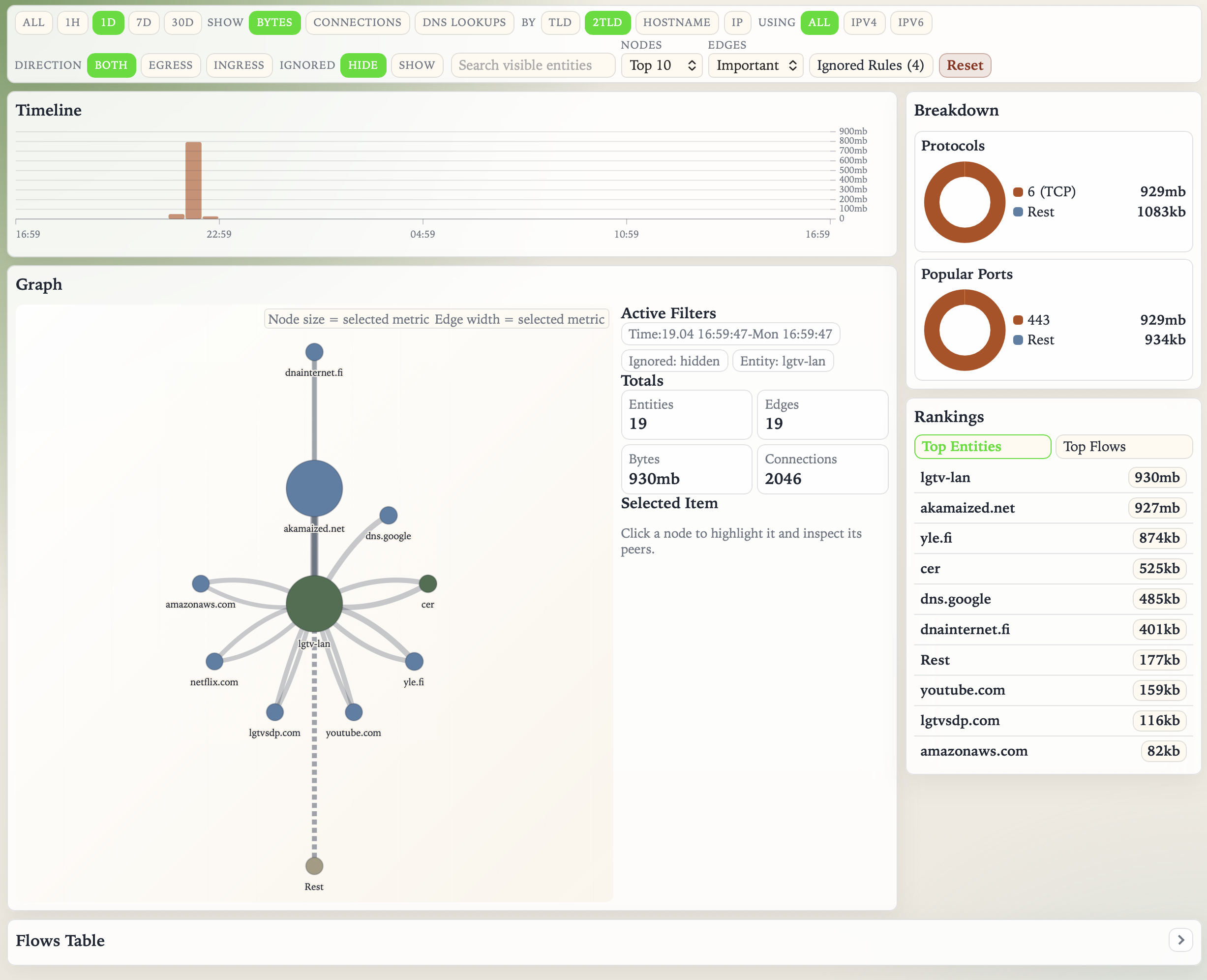

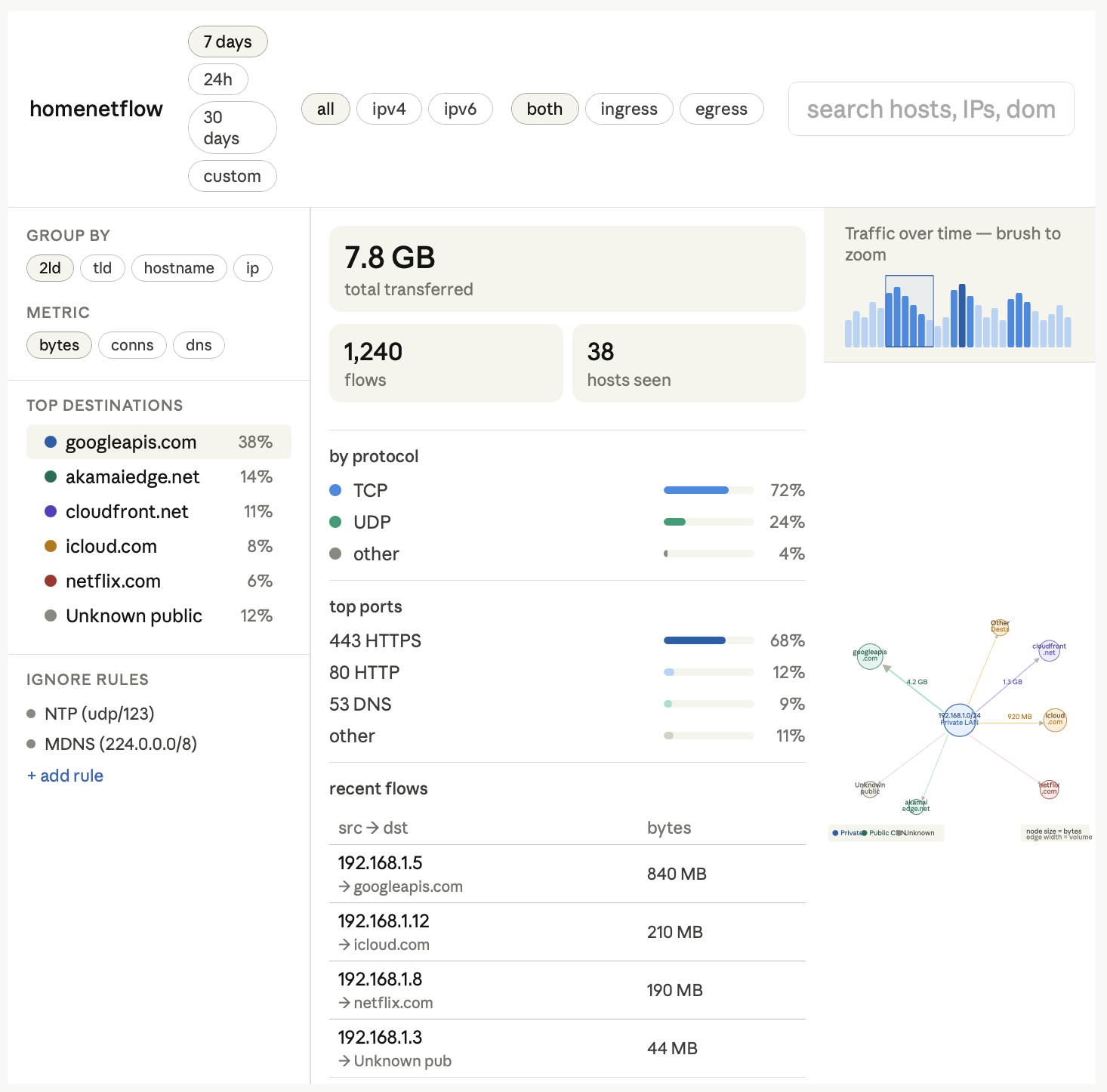

Then the UI (started with `make ui`) can be used to browse traffic. It currently looks like this:

It was created entirely with Codex CLI (54 sessions, with a handful of interactions each).

You can basically dig down to whatever you care about (in this example, what our TV has chatted with during the last 24 hours), and ignore things you don’t want to show (similar to the rules Lixie has).
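To make the drill-down idea concrete, here is a small Python sketch of "what has one host talked to, minus the things I chose to ignore". The flow records are plain dicts with hypothetical `src`, `dst` and `bytes` fields, and the rule format is invented for this example; the real parquet schema and the real rule format of the app may look quite different.

```python
from collections import Counter


def is_ignored(flow, rules):
    """A rule is a dict of field -> value; it matches if every field matches.

    Note that an empty rule matches every flow.
    """
    return any(
        all(flow.get(field) == value for field, value in rule.items())
        for rule in rules
    )


def peers_of(flows, host, rules=()):
    """Sum bytes per remote peer for one source host, skipping ignored flows.

    Returns (peer, total_bytes) pairs, largest total first.
    """
    totals = Counter()
    for flow in flows:
        if flow["src"] != host or is_ignored(flow, rules):
            continue
        totals[flow["dst"]] += flow["bytes"]
    return totals.most_common()


if __name__ == "__main__":
    # Made-up sample data, only to show the shape of the input.
    sample = [
        {"src": "tv", "dst": "peer-a", "bytes": 10},
        {"src": "tv", "dst": "peer-b", "bytes": 30},
        {"src": "laptop", "dst": "peer-a", "bytes": 99},
    ]
    print(peers_of(sample, "tv", rules=[{"dst": "peer-b"}]))
```

Restricting the view to the last 24 hours would just be one more filter on a timestamp field, which is left out here.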

I have actually already found one issue with its help: it supports showing DNS requests by host, and it turned out that my OpenWrt 25.12 upgrade of frankenrouter had broken its ad blocking. Another thing I found out was that our TV was talking to finnpanel.fi, which is apparently some TV activity measurement agency, and one I definitely had not opted in to. So one more entry to the blocklist.
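The kind of check that surfaces something like this can be sketched in a few lines of Python: group DNS queries by client and flag names that fall under a watched domain. The `(client, qname)` record shape and all function names are assumptions made for illustration, not how the app stores or queries its data.

```python
from collections import defaultdict


def queries_by_host(records):
    """Group queried names by client; records are (client, qname) pairs."""
    grouped = defaultdict(set)
    for client, qname in records:
        # Normalize: drop a trailing root dot and compare case-insensitively.
        grouped[client].add(qname.rstrip(".").lower())
    return grouped


def matches_domain(qname, domains):
    """True if qname equals one of the domains or is a subdomain of one."""
    labels = qname.rstrip(".").lower().split(".")
    return any(".".join(labels[i:]) in domains for i in range(len(labels)))


def flag_queries(records, watched):
    """Return {client: sorted flagged names} for queries hitting a watched domain."""
    hits = {}
    for client, names in queries_by_host(records).items():
        flagged = sorted(name for name in names if matches_domain(name, watched))
        if flagged:
            hits[client] = flagged
    return hits


if __name__ == "__main__":
    # Sample records are made up apart from the domain mentioned in the text.
    records = [
        ("tv", "finnpanel.fi."),
        ("tv", "example.com"),
        ("laptop", "example.org"),
    ]
    print(flag_queries(records, watched={"finnpanel.fi"}))
```

Matching on label boundaries rather than plain substrings avoids flagging unrelated names that merely end with the same characters.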

So that was HomeNetFlow. The second aspect of this: the UI above is not pretty, so what happens if I try to facelift it using LLMs?

Facelift project with different LLMs

Now that Kimi K2.6 has come out, I decided to use it, Minimax M2.5, and GPT-5.4 to improve the look of the app. I gave all the LLMs the same prompt, and wanted to see what they would do. OpenCode was used as the harness, and for each version a plan was created first and then implemented in a separate step.

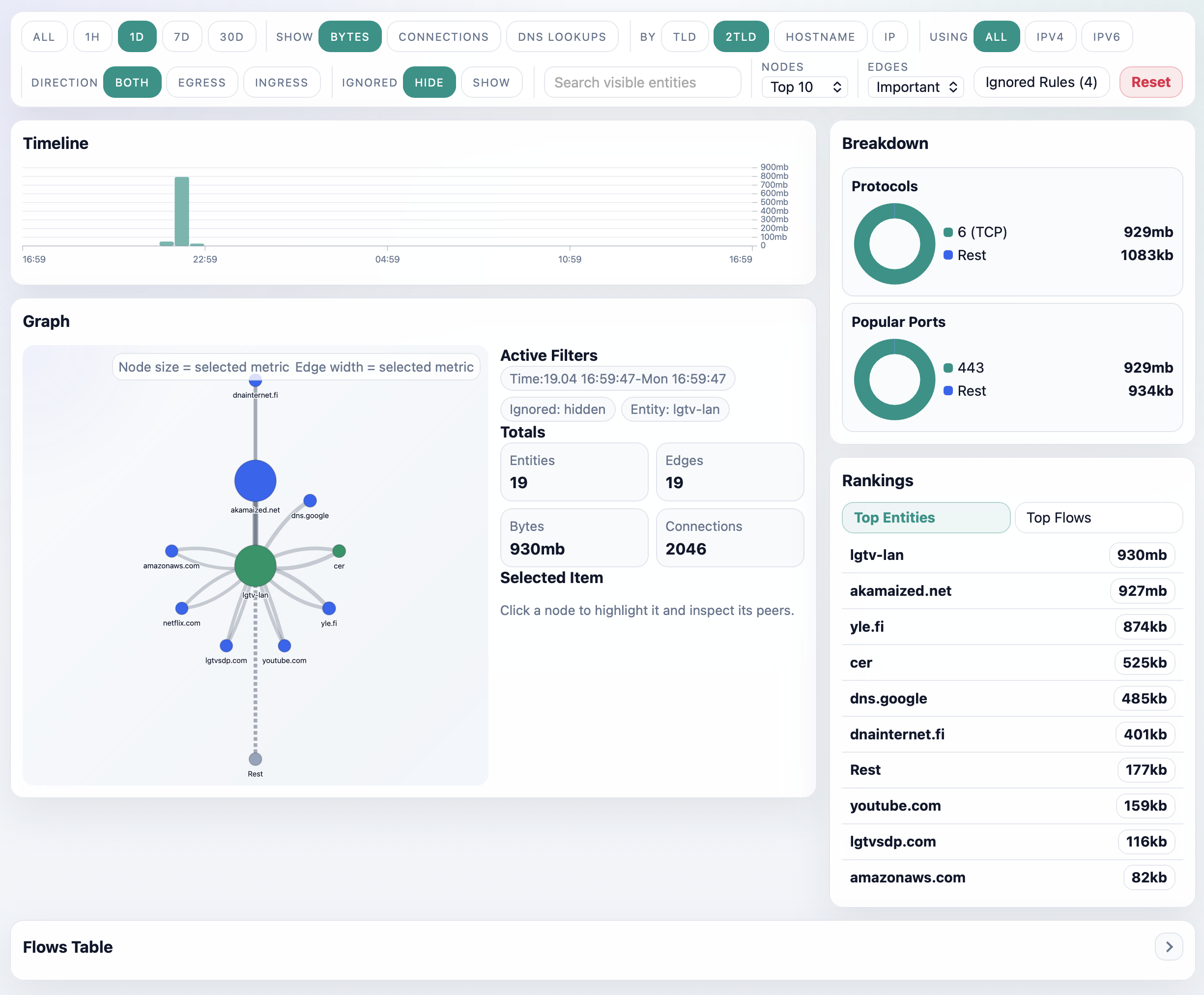

Kimi K2.6 version

Plan: Kimi K2.6 - Implementation: GPT-5.4 Mini

I think this actually looks the best. It is slightly better looking than the original in some ways, although I am not sure I am a huge fan of the color scheme. Oddly, when there are 3 colors, e.g. TCP and Rest wind up with the same color, so there is some logic error there.

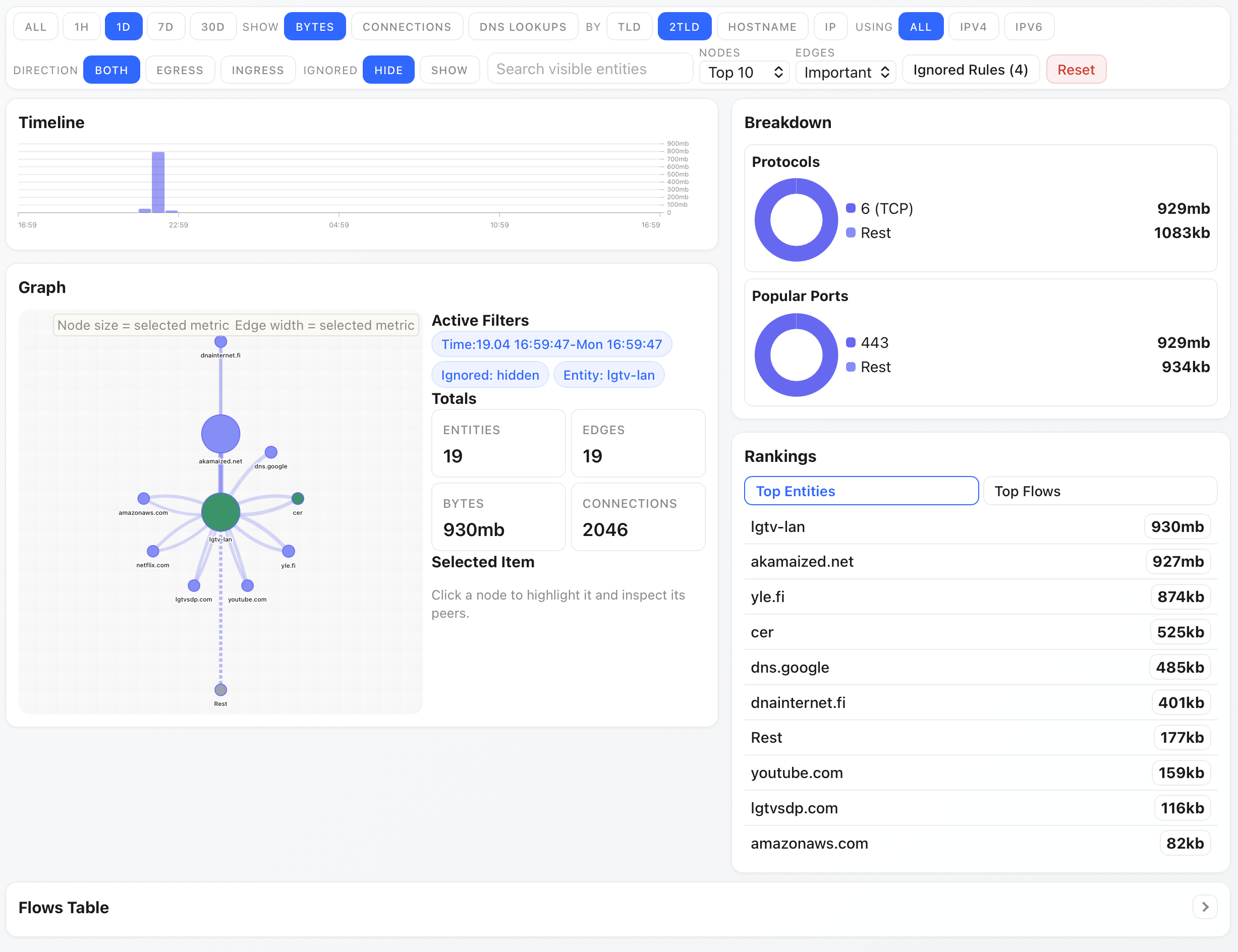

Minimax M2.5 version

I think this looks pretty reasonable too, although the blue-on-blue breakdown is a bit odd.

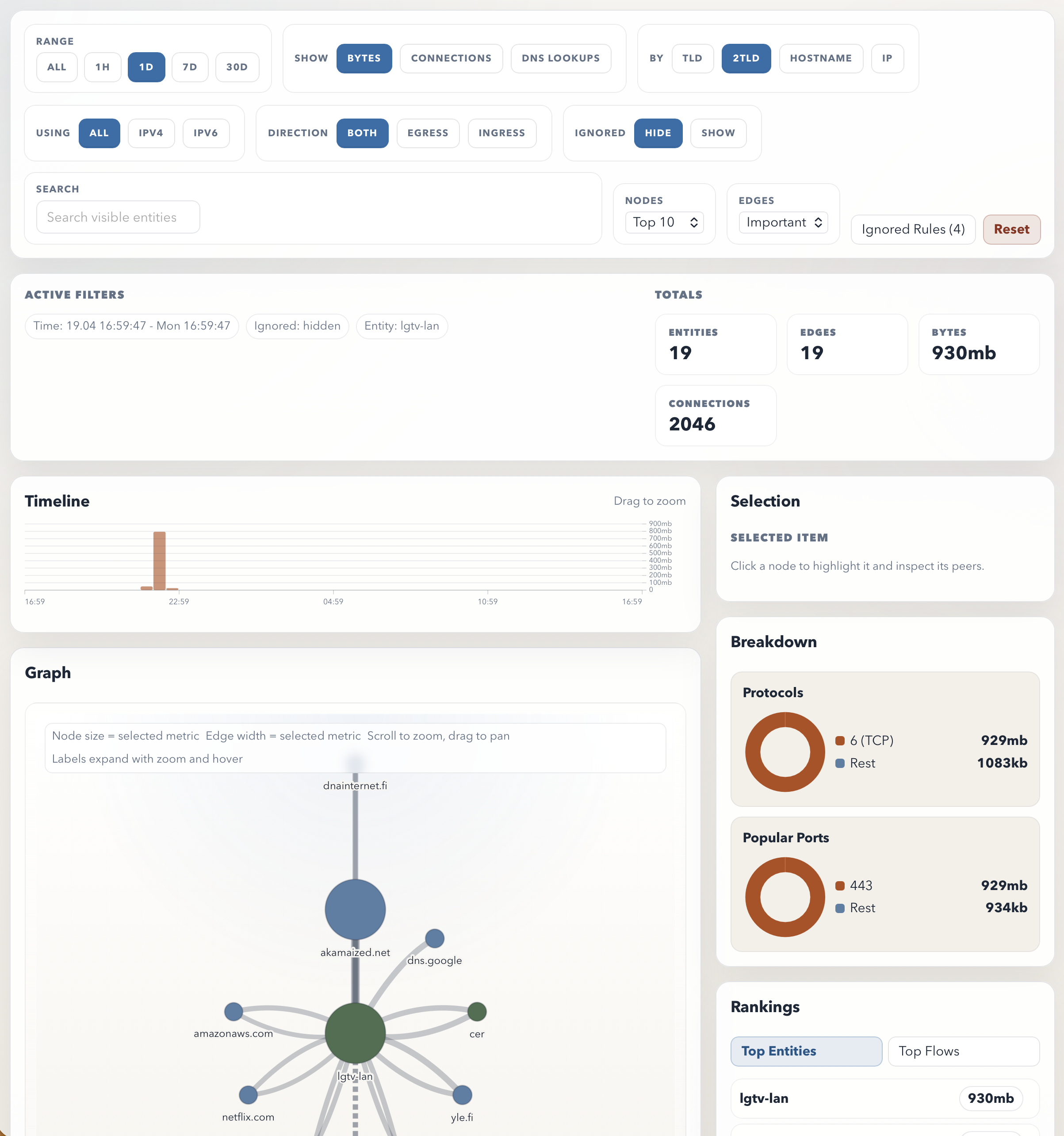

GPT-5.4 version

Unfortunately, GPT-5.4 actually just made the design worse. It no longer fits on screen, and I’m not sure why it made the changes it did.

Bonus: Claude sketch

I don’t currently have a Claude Code subscription, but I asked Sonnet 4.6 to sketch what the UI would look like. I’m not sure I am that impressed by it either, although it admittedly looks different from the current implementation.

Thoughts

I was somewhat underwhelmed by what the LLMs produced, but I think I handicapped them a bit by giving the existing code as input. Refactoring an existing implementation is probably a trickier goal for an LLM than creating a new design from scratch, as it gets anchored to the existing choices.